Bringing your Chatbots to Life with Flow XO and ChatGPT

Unless you have been living underground for the last several months, you have heard all the hype around "generative AI" and specifically ChatGPT. ChatGPT is probably best known for its amazing creative writing abilities. It can write blog posts, short stories, and even rap battles from simple prompts. As an example, I asked our Flow XO ChatGPT chatbot to "introduce someone to ChatGPT who has never heard of generative AI" and this is what it said:

ChatGPT is a powerful AI tool that uses generative AI to create human-like text. Generative AI is a type of artificial intelligence that can create new content, such as text, images, and even music, without being explicitly programmed to do so. With ChatGPT, you can create chatbots that can understand natural language and respond to customer inquiries in real-time. This means that you can provide your customers with instant support, 24/7, without the need for human intervention. Additionally, ChatGPT can help you automate tasks such as appointment scheduling, order processing, and more, freeing up your team to focus on more important tasks. By using ChatGPT, you can improve your customer experience, increase productivity, and ultimately grow your business.

ChatGPT

But it isn't limited to only stuffy marketing text. I asked it to write a short poem about Flow XO:

Flow XO, the chatbot king,

No coding skills? No problem, bring

Your business to life with ease,

And chatbots that will surely please.

Whether or not this AI is ultimately a blessing or a curse for society remains to be seen, but it is indisputable that the technology will have a major impact on our world, and particularly how efficiently we can automate our businesses to communicate with our customers.

In this article, I will show you how to use ChatGPT and other Open AI technologies together with Flow XO to create compelling conversational experiences and to automate previously difficult tasks.

The many capabilities of generative AI

Generating content from prompts is an amazing capability of these language models, one with many business applications. But this technology can perform other very useful tasks as well, such as:

- Language translation

- Content creation

- Text summarization

- Sentiment analysis

- Question answering

- Text classification

- Image generation

- Content moderation

Each of these can be extremely useful when building conversational experiences, and we'll cover several of them in this article. Let's get started!

Note: For more details on the specifics of how to use each of the Open AI tools, and links to useful Open AI resources, see our help document for the Open AI integration.

ChatGPT Quickstart

Just need AI powered Q&A on top of your own business data or helpdesk content?

See our Knowledge Base feature, which makes giving your chatbot infinite cosmic knowledge about your business instant and easy.

Need to have complete control of your ChatGPT prompts, but would like to incorporate dynamic, relevant content from your own business data? Here you go.

IMPORTANT: If you get an error when adding your Open AI API key to Flow XO

If you see an error when connecting Open AI to Flow XO, it is because of this: https://help.openai.com/en/articles/6614457-why-am-i-getting-an-error-message-stating-that-i-ve-reached-my-usage-limit

Please try one of the resolutions from that link to get past the issue.

- Classification & Intent Detection - Need to analyze your users' messages to determine what action your bot should be taking? See our Detect User Intent and Text Classification tasks.

- Translation - Need to translate your messages to another language? Or detect what language your users are using, and translate their messages into your native tongue? Our Translation task is the perfect tool.

- Summarization - Want to summarize a chat conversation? Or summarize a longer article into a short, simple paragraph? Try our summarization tasks.

Also check out our in-depth tutorial on building a fully functional, multi-purpose customer service agent using ChatGPT here.

First, we'll set up the simplest possible ChatGPT chatbot using Flow XO, and then we'll improve it from there. Before we do that, you'll need both a Flow XO account and an Open AI account.

1. Get your free Flow XO account here

2. Get your free Open AI account here

Once you have an Open AI account, you will need to generate an API key. Here are the steps. If you prefer, you can open the completed flow in your account: https://flowxo.com/share/toolset-organic-7009

Be sure to copy your API key, because once you close the window you won't be able to access it again.

Put it somewhere safe; you'll need it later when connecting Open AI to Flow XO.

3. Create a new, blank, Flow XO Flow.

Choose "Blank Flow"

And for your trigger, choose "Catch All". The "Catch All" trigger will respond to any message not handled by another trigger. If you are working inside an existing Flow XO account that already has catch-all triggers set up, you may have to use a different kind of trigger. For more information on the basics of working with Flow XO, see here: https://flowxo.com/getting-started-with-flow-xo/

Your flow should now look something like this:

Right now, the flow won't do anything. We need to add some steps. Since we're building a ChatGPT bot, we'll want to add the Open AI task.

Paste the Open AI API key you received from earlier in the tutorial in the Open AI API Token field and click "Next"

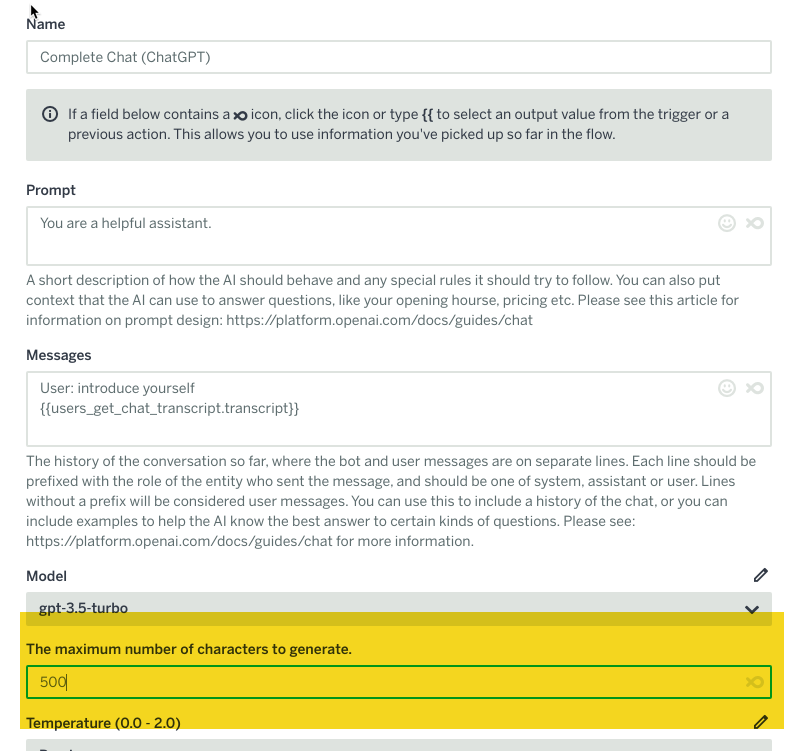

For the simplest possible ChatGPT chatbot, one that relies only on ChatGPT's original training and does not keep a history of the conversation, all you need to do is insert the incoming message into the "Messages" field. Each line in this field is considered a single message from the user or the chatbot. Assistant messages start with an "Assistant: " prefix, and user messages start with a "User: " prefix.
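
To make the one-message-per-line format concrete, here is a minimal Python sketch of how such a field could be split into the role-tagged messages that chat models consume. The function name `parse_messages` and the fallback for lines without a prefix are assumptions made for illustration; this is not Flow XO's actual implementation.

```python
def parse_messages(field_text):
    """Turn 'User: ...' / 'Assistant: ...' lines into role/content dicts."""
    prefixes = {"User:": "user", "Assistant:": "assistant"}
    messages = []
    for line in field_text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        for prefix, role in prefixes.items():
            if line.startswith(prefix):
                content = line[len(prefix):].strip()
                messages.append({"role": role, "content": content})
                break
        else:
            # Assumption: a line without a known prefix counts as a user message.
            messages.append({"role": "user", "content": line})
    return messages


example = (
    "User: What is Flow XO?\n"
    "Assistant: A chatbot platform.\n"
    "User: Who founded it?"
)
parsed = parse_messages(example)
print(parsed)
```

Each resulting dictionary pairs a role with the text of one line, which mirrors the "one line, one message" rule described above.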

To tell ChatGPT about the message from our user, type "User: " then insert a variable containing the message we received from the user:

Your configuration will now look like this:

Click "Save".

Now we need to add a step so that the response from ChatGPT is sent back to the user:

Make sure to enable your flow in the top right hand corner:

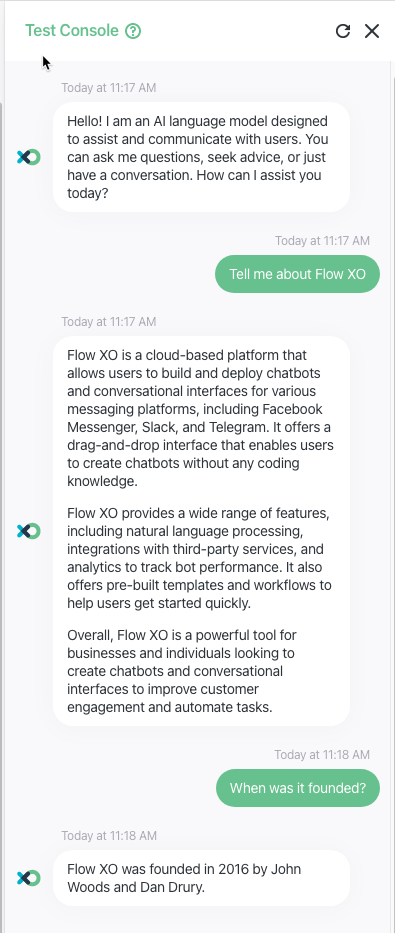

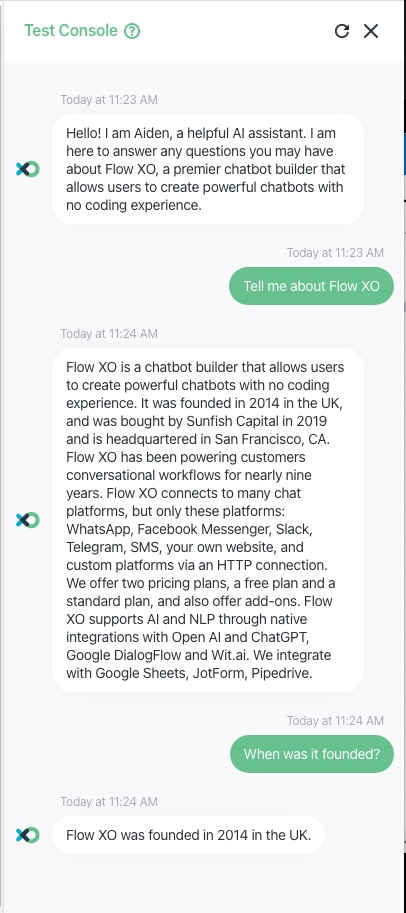

And now your ChatGPT chatbot is ready for testing. Open the test console by clicking the chat bubble in the lower right hand portion of the editor:

You are now chatting with ChatGPT! Ask it anything you like. Ask it to write you a poem about itself, or to make a list of the top 10 ways to make money with ChatGPT :)

In the screenshare above, I asked it to tell me about Flow XO, and it gave a reasonable response. What's interesting about that is that we didn't prepare it with any knowledge of Flow XO whatsoever. Flow XO has been around on the internet for many years, and so it's already part of ChatGPT's training data. This is great! Well, it's kind of great.

One of the benefits and drawbacks of ChatGPT is that it will do its best to provide a reasonable answer to any question. To do that, it may make things up, use out-of-date information, or answer based on information it knows about products similar to Flow XO that might not actually apply. Be very careful about this; we'll discuss ways to mitigate the problem later.

Before you go wild chatting with ChatGPT, be aware that our bot has one big flaw: it doesn't keep any context about the conversation yet. Each new message is treated as brand new. For example:

That's not how a real person would have a conversation unless they had a severe short-term memory issue. Let's fix that.

Flow XO has a utility function that lets you pull up a transcript of a chat with your user so far. We'll use that to give ChatGPT some memory.

Insert a step above the ChatGPT step, choose "More Actions" and select "Flow XO - Utilities" -> "User - Get Chat Transcript"

By default, the Get Chat Transcript step retrieves the last 50 messages. I recommend using somewhat fewer than this for a few reasons. First, ChatGPT is limited to 4096 tokens, so if you try to send it a huge chat transcript, it can run out of tokens and fail. Second, this step stores the chat transcript in the state of your interaction, so huge chat transcripts can slow things down for your users. It generally isn't necessary for ChatGPT to remember too far into the past; between 5 and 20 historical messages should usually be plenty.
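
As a rough illustration of why a shorter transcript helps, the Python sketch below keeps only the most recent messages that fit within a message count and a token budget. The helper name `trim_transcript`, the default limits, and the "about 4 characters per token" estimate are assumptions for the example; real token counts depend on the model's tokenizer.

```python
def trim_transcript(messages, max_messages=20, max_tokens=3000):
    """Keep the most recent messages that fit within both limits."""

    def estimate_tokens(text):
        # Rough approximation: about 4 characters per token for English text.
        return max(1, len(text) // 4)

    recent = messages[-max_messages:] if max_messages > 0 else []
    kept = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(recent):
        cost = estimate_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [f"User: message number {i}" for i in range(50)]
recent_history = trim_transcript(history, max_messages=10)
print(len(recent_history), recent_history[-1])
```

The budget is deliberately set below the model's limit so that there is still room left for the reply.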

Now we need to insert the chat transcript into the "Messages" field in ChatGPT.

Click "Edit" on the ChatGPT step, and remove the content in the "Messages" field. Then on the first line type:

User: introduce yourself

On the next line, choose from the Flow XO variables the chat transcript we just loaded:

The last message from the user will always be the last message in the transcript. So we no longer have to pass in the message from the trigger. But why did you add "User: introduce yourself"?

Taking a closer look at the conversation, you can see that the response from ChatGPT was cut off. That's because we only allowed it to generate 100 tokens. To fix that, edit the ChatGPT task and change the Max Characters to 500. That should be plenty.

Nice! ChatGPT remembered what we were talking about this time, and answered accordingly. The bad news is that its answer is completely made up. Flow XO was founded in 2014 by John Jackson. This highlights the danger of just throwing up a ChatGPT chatbot onto your website and hoping that it gives your customers good information. It will always be able to have a reasonable conversation, but left to its own devices it is happy to fabricate information that sounds realistic.

And try our conversation again:

Total gibberish. If you are using ChatGPT to do creative writing, you will want to use the Creative setting. For most other tasks, Precise is appropriate. Insane is for demo purposes only; it produces pretty much random garbage. You can choose other values as well by clicking the pencil icon next to the temperature setting and entering a number between 0 and 2, such as 1.25 for a little extra randomness but not quite insanity.
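
Temperature is commonly described as a divisor applied to a model's raw token scores before one token is sampled. The Python sketch below illustrates that general idea with made-up scores for three candidate tokens. It is not OpenAI's or Flow XO's code, and the numeric values behind the named presets are not assumed here.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, scaled by temperature."""
    if temperature <= 0:
        # Treat 0 as "always pick the top-scoring token".
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0, 0.1]  # made-up scores for three candidate tokens
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, t)
    print(t, [round(p, 3) for p in probs])
```

Low values concentrate almost all probability on the top-scoring token, while high values flatten the distribution so that unlikely tokens get picked more often, which is consistent with the gibberish seen at the highest setting.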

Using a "Label Loop" to keep the conversation contained and Flow XO costs down

The flow design we showed earlier is great for most use cases. However, if you want the chatbot to ONLY be aware of messages sent and received during a single session, i.e. you want the chat history that ChatGPT knows about to be reset for new conversations with the same user, or you just want to save on interactions in Flow XO, you can use a Label to create a conversation loop. Note that this technique will ONLY work for paid users, as free users have a loop limit of 5, which isn't nearly enough for most conversations. If you try this technique using a free account, the bot will simply stop responding after a few messages.

The basic structure is the same, but the difference is that we will add a label at the beginning of the flow, then after ChatGPT answers, we'll prompt the user for another question or ask them if they want to quit. If they put in a new question, we loop back to the beginning.

I won't do a complete walkthrough of this, but the key points are:

1. When loading conversation history, use the "Message Received" timestamp from the trigger for the Start Time in the Get Chat Transcript step. This will limit the chat history to that interaction only.

2. You must give the user a way to end the conversation. Otherwise, it will go on forever. The easiest way to do this is when asking the user for another message, give them a button to indicate they are done, then only loop to the beginning if they don't click that button. Here's a sample flow that demonstrates this technique. You can load it up in your account and study it to see how this is done:

An Open AI chatbot using labels and looping: https://flowxo.com/share/fault-array-2144
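
The two key points above can also be sketched in Python: limiting history to messages sent since the conversation started, and looping until the user signals they are done. The field names (`sent_at`, `text`), the helper names, and the "done" keyword are hypothetical stand-ins for the trigger timestamp and the exit button in a real flow.

```python
from datetime import datetime


def messages_since(transcript, start_time):
    """Return only the messages sent at or after start_time."""
    return [m for m in transcript if m["sent_at"] >= start_time]


def run_loop(user_inputs, answer, done_word="done"):
    """Answer each input in turn until the user says they are done."""
    replies = []
    for text in user_inputs:
        if text.strip().lower() == done_word:
            break  # the user chose to end the conversation
        replies.append(answer(text))
    return replies


transcript = [
    {"sent_at": datetime(2023, 3, 1, 9, 0), "text": "User: old question"},
    {"sent_at": datetime(2023, 3, 2, 14, 0), "text": "User: new question"},
    {"sent_at": datetime(2023, 3, 2, 14, 1), "text": "Assistant: new answer"},
]
# Stand-in for the trigger's "Message Received" timestamp.
session_start = datetime(2023, 3, 2, 14, 0)
session = messages_since(transcript, session_start)
print([m["text"] for m in session])
```

Without the explicit exit check, the loop would keep waiting for another message, which is why the flow needs a way for the user to finish.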

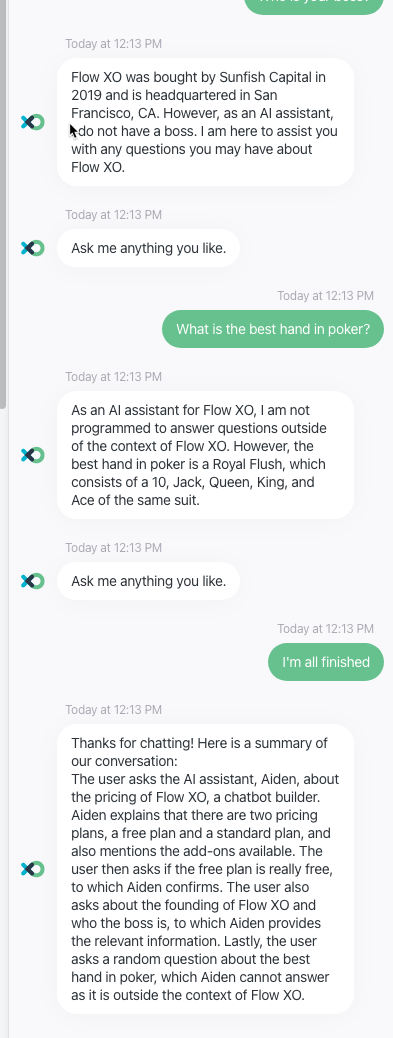

The loop sample does have a nice bonus feature, however, which is that because the flow has a beginning and an end you can generate a chat summary when the conversation is complete. Show it to your user, or send it to your support team; there are many uses for this, and it also demonstrates another nice feature of the Open AI toolkit.

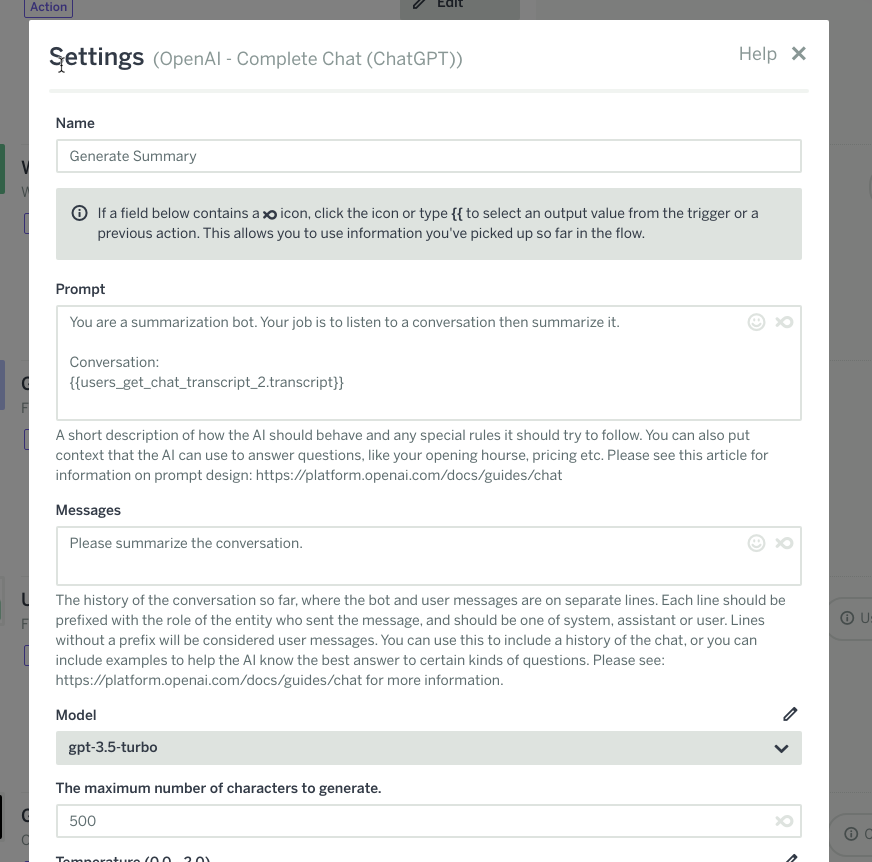

Summarizing chats, or other long content

In the "Using a Loop" sample, the flow ends by telling the user a summary of the conversation. That segment of the flow looks like this:

We used the "Complete Chat (ChatGPT)" API for this task. As I mentioned, there is another Open AI task that can be used for these kinds of tasks, "Complete Text" - but in general, you want to try to use "Complete Chat (ChatGPT)" instead if it produces reliable output for your use case. The reason is that it is faster, 10x cheaper, and according to Open AI performs just as well. The only trick is that you have to formulate each task as if it were a chat. You can see how this is done in the screenshot above.
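
One possible way to phrase a summarization task as a chat is to wrap the instruction and the transcript in a single user message. The Python sketch below shows that shape; the function name `build_summary_messages` and the wording of the instruction are assumptions, not the exact prompt used in the sample flow.

```python
def build_summary_messages(transcript_lines, max_sentences=3):
    """Wrap a summarization request in a single user chat message."""
    instruction = (
        f"Summarize the following conversation in at most "
        f"{max_sentences} sentences:"
    )
    body = "\n".join(transcript_lines)
    return [{"role": "user", "content": instruction + "\n\n" + body}]


chat = [
    "User: Do you support Telegram?",
    "Assistant: Yes, Telegram is one of our supported platforms.",
]
summary_request = build_summary_messages(chat)
print(summary_request[0]["content"])
```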

Using ChatGPT internally for generating copy for your conversational flows, or for anything else!

A technique that we use here at Flow XO, and you will likely want to implement as well in your own business, is to create a bot you can use internally to have ChatGPT generate helpful text that you can paste into your flows, or use anywhere in your marketing. At Flow XO, we set up an internal bot on a dedicated Slack channel that we don't give out to users that lets us generate images, give custom prompts to ChatGPT to generate different kinds of text, summarize long articles and more. It's a wonderful tool, and a lot of fun to have around - we highly recommend you build one for yourself and host it on whatever channel your team uses most. You can use all the techniques in this article to make it very flexible and powerful.

A Tale of Two (or Three) Models - Generate Chat vs Generate Text

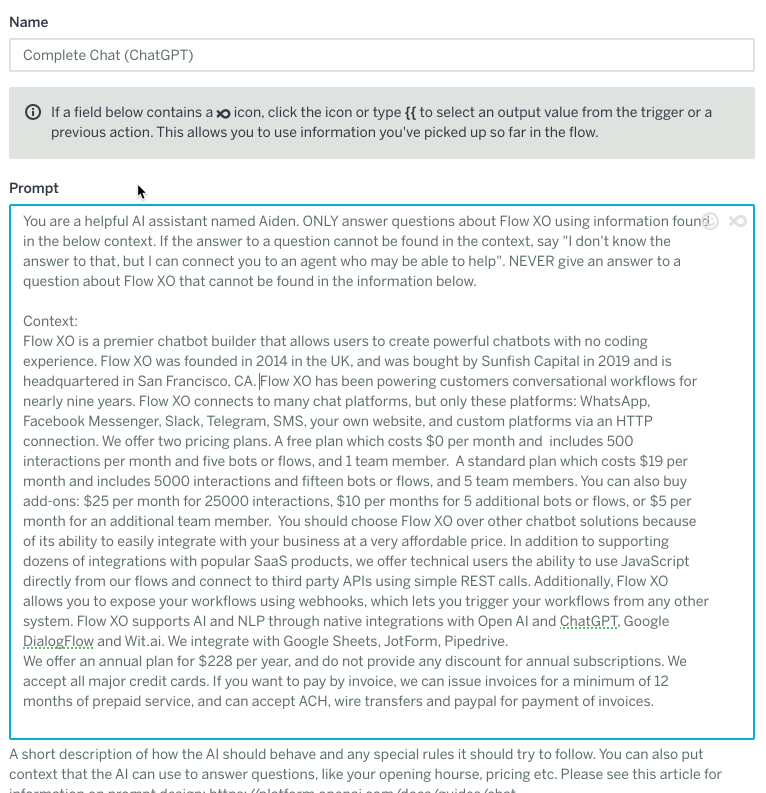

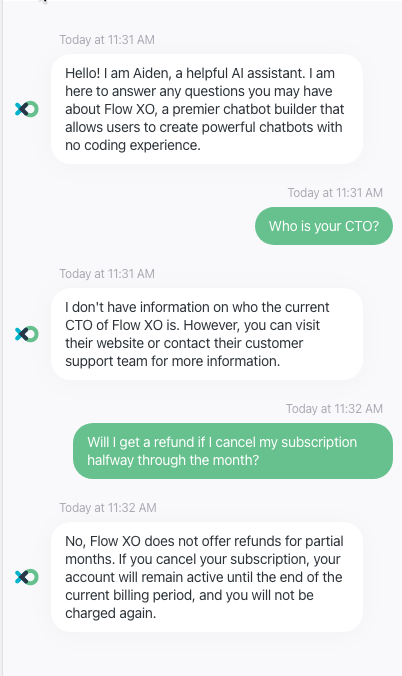

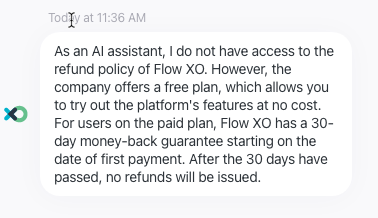

I mentioned earlier that ChatGPT is particularly prone to making up answers. The usual solution is to be very explicit in the prompt, telling the bot not to make stuff up. Neither ChatGPT (Generate Chat) nor GPT-3 (Generate Text) follows those instructions perfectly. However, Generate Text (GPT-3) seems to be better at following instructions. Here is a sample chat with ChatGPT (Generate Chat):

This is a very interesting conversation. The answer about the refund policy is flat wrong. Interestingly, and somewhat amazingly, the questions about writing to a local database and using a database proxy, both very technical questions, are completely accurate, without us giving that information to the model in the prompt. Then it goes wrong again, as ChatGPT just invents URLs to our documentation. What's fascinating is that it correctly guesses the format of the URLs for our helpdesk, but they are just dead links. Pure fiction.

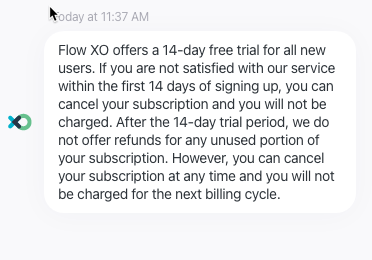

Here is the exact same chat (with the exact same prompt and examples) using GPT-3 (Generate Text):

In the prompt of both bots, we told the AI not to answer what it doesn't know for sure from the context we gave it. You can see this second version using Generate Text did a much better job of following that order. You can also see there are trade-offs. While it was more compliant about not answering questions that did not have answers in the prompt, it also didn't give some correct answers about the database integration that would be helpful to the user. And while it mostly avoided inventing answers as we asked it to, one exception is when we asked for the documentation for WhatsApp. Here, it just made up a broken link. It did, however, refuse to make up a link for customer.io. Because these models are black boxes, it can be hard to know why it made up a link for one question, and didn't do the same thing for a nearly identical question.

Note that in addition to how well they follow prompts, the models differ in how they recover from mistakes as well. Here is what happened when I told ChatGPT that the link was broken:

It just lied again and again, making up a slightly different URL each time. Very unhelpful.

Now, when I asked GPT-3 about the broken link, it did better:

The bottom line here is that as powerful as these models are, they can't be fully trusted to represent your business right out of the box. You must thoroughly test your solution with multiple models, asking your bot everything you think your customers might ask, and then continuously review customer chats over time to enhance your prompts with information that prevents the bot from making up answers to critical questions. Try to use "Complete Chat" over "Complete Text" when your testing shows adequate results - Complete Chat, as we have mentioned, is cheaper, it's faster, and it probably will get the most attention from OpenAI, so it may improve on the ability to curb its lying (called "hallucinations") in the future. But if you are having trouble with your bot giving out wrong information using Complete Chat, try Complete Text, and try re-wording the prompts to set rules for it to follow, or try adding better examples. Our testing shows that Complete Text follows instructions and pays more attention to examples than Complete Chat, which seems optimized to seem as human as possible at all costs.

GPT-4

Since this article was originally written, Open AI has released GPT-4. GPT-4 is revolutionary yet again, able to pass the bar exam in the 90th percentile, create websites from cocktail napkin drawings, and other feats of strength and brilliance. At the time of this writing, GPT-4 is still in beta and requires an invite. (Sign up on the waitlist now if you haven't already). In our tests, GPT-4 did a superior job to both ChatGPT and GPT-3 in terms of answering accurately, honestly, and not fabricating information. It was the only model of the three not to make up documentation links at all, and answered thoughtfully even when it wasn't sure of the answer.

For example, although it had no information about our database integration, it does know about our HTTP integration from the context (same as the tests with the other models). Instead of guessing based on its training data (ChatGPT, dangerous), or saying simply "I don't know" (GPT-3), GPT-4 gave the best answer it could, which was accurate and honest:

At the moment, however, GPT-4 is very expensive compared to either ChatGPT or GPT-3. So while it is likely the best possible model to use, in terms of quality, and a very viable option if you have a low volume chatbot, you will likely still want to do your best to get adequate results from ChatGPT or, in a pinch, GPT-3, and resort to GPT-4 only when quality is far more important than cost, or your volume is low and the overall cost would not be very high.

Using "Complete Text (GPT-3)" to create your chatbot:

https://flowxo.com/share/monitoring-analyzer-2509

So how do you use Complete Text instead of Complete Chat? It's actually very similar, and very easy. Here's how you configure Complete Text to do the exact same as our previous Complete Chat examples:

The most important thing to note here is the last line, "Assistant: "

The Complete Text task works by filling in the most likely text that should follow a prompt. Unlike Complete Chat, Complete Text doesn't know anything about a "conversation" with the user. It's just trying to complete your text, and so you want to provide the "Assistant:" label so that it knows it's time for the bot to provide an answer.

Everything else works exactly the same as ChatGPT.
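
The role of the trailing "Assistant:" label can be sketched in Python as a prompt builder that always ends on that cue. The function name `build_completion_prompt` and the sample instructions are assumptions for illustration; the actual prompt lives in the Complete Text step of the sample flow.

```python
def build_completion_prompt(instructions, transcript_lines):
    """Join instructions and transcript, ending with an 'Assistant:' cue."""
    lines = [instructions.strip(), ""]
    lines.extend(line.strip() for line in transcript_lines)
    lines.append("Assistant:")  # cue the model that the bot speaks next
    return "\n".join(lines)


prompt = build_completion_prompt(
    "You are a helpful support assistant for Flow XO.",
    ["User: Hello", "Assistant: Hi! How can I help?", "User: Do you support Telegram?"],
)
print(prompt)
```

Because a completion model simply continues the text, ending on the label makes the most likely continuation the bot's next reply rather than another user line.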

Interactivity - Making the AI interact with your workflows

Here is the sample we will be working with so you can play along: https://flowxo.com/share/monitoring-analyzer-2509. This is the same sample from the previous section that demonstrates using Complete Text for your chatbot, by the way, so if you just installed that, there is no need to install it again.

You may have noticed in some of the sample conversations above that the chatbot told the user they would transfer them to an agent. Well great! Except - how is it supposed to do that? All these kinds of AI can do is generate text. There is no aspect of the Open AI API that lets the bot interact with your workflow. This is probably a good thing. If you paid any attention to the insane behavior of Microsoft's Bing chatbot that was all over the news for a while, then you know you don't want these things given the capability to push buttons or pull levers on their own; the world would quickly descend into chaos :)

But not to worry, there are different ways to make your bots more useful and interactive. Let's take the transfer to agent example.

The simplest way to accomplish a human handoff from the AI is to add a step just after the AI generates a response, with a filter that checks for certain keywords in the response text and reacts accordingly. For example, ChatGPT is pretty consistent in how it phrases things. You could check for the word "agent" or the phrase "transfer you" or similar, and start a live chat if the AI generates a response with those keywords. A more sophisticated way to do the same thing is to create a second task using Open AI that will try to guess what your user wants from their question, and then react accordingly. In Natural Language Processing (NLP) this is often called "Intent Detection". It is also more broadly an application of "Text Classification". The idea is that the language model can take a bit of text, usually a question or command from your user, and categorize it into one of a list of possible alternatives you provide.

For example, you could ask the AI to classify the user question into one of the following intents: agent, sales, marketing, pricing, unknown. It will then (usually) spit back the single word category that you can use in your filtering logic later in the flow. This is extremely powerful.
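
Because the model only "usually" replies with a single clean word, it helps to normalize its reply before filtering on it. The Python sketch below builds a classification prompt from the intent list above and maps any unexpected reply to "unknown". The function names and the prompt wording are assumptions for illustration, not the text used by the Flow XO tasks.

```python
INTENTS = ("agent", "sales", "marketing", "pricing", "unknown")


def build_intent_prompt(question, intents=INTENTS):
    """Ask the model to pick exactly one intent from the list."""
    return (
        "Classify the user's question into exactly one of these intents: "
        + ", ".join(intents)
        + ". Reply with the single intent word only.\n\n"
        + "Question: " + question
    )


def normalize_intent(model_reply, intents=INTENTS, fallback="unknown"):
    """Clean up the model's reply and fall back when it is not a known intent."""
    cleaned = model_reply.strip().strip(".!\"'").lower()
    return cleaned if cleaned in intents else fallback


print(build_intent_prompt("How much does the standard plan cost?"))
print(normalize_intent(" Pricing.\n"))
print(normalize_intent("I'm sorry, I cannot classify that."))
```

Falling back to a known category keeps later filter steps from having to handle free-form text.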

Here again we observe a difference between Generate Chat (ChatGPT) and Generate Text. We find that they work equally well in most cases, but Generate Chat (ChatGPT) will sometimes refuse to classify the intent because it doesn't like the user's input. Generate Text much more reliably produces a result, without trying to validate or police the user input. Again, neither is necessarily better - it all depends on your specific needs. I'll discuss this in more detail when we talk about Command Interpretation later in this article.

If you haven't already loaded up the sample for this section, go ahead and do so now and configure your API key. This example is a fun one - it includes intent detection, of course, to help with human handoff, and also demonstrates the ability to make AI generated images when the user requests one.

Let's start with the simplest form of Human Handoff - i.e. transferring to an agent.

Simply add a new "Start Live Chat" step after the GPT text generation step. Use a filter to only activate this step if the bot's response included the text "transfer you" or "connect you to". Testing will be required to find the exact right phrases to include in your filter for your use case.

Of note on this filter page is that you want to make sure to use "OR" to combine the filters, since ANY of the phrases should trigger live chat. You also want to make sure to click "Also stop rest of flow if conditions are met". Once a live chat is initiated, you don't want the flow to keep running, the humans are in charge now.

It's that simple.
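
For readers who think in code, the filter logic above amounts to an "any phrase matches" check followed by an early stop. This Python sketch is only an analogy for the flow filter; the function names and step labels are hypothetical, and the phrase list should come from your own testing.

```python
HANDOFF_PHRASES = ("transfer you", "connect you to")


def should_start_live_chat(bot_response, phrases=HANDOFF_PHRASES):
    """True if ANY handoff phrase appears in the response (an OR filter)."""
    text = bot_response.lower()
    return any(phrase in text for phrase in phrases)


def next_steps(bot_response):
    """Decide what the flow does after the AI has replied."""
    if should_start_live_chat(bot_response):
        # Equivalent to "Also stop rest of flow if conditions are met".
        return ["start_live_chat"]
    return ["continue_flow"]


print(next_steps("Sure, let me transfer you to an agent."))
print(next_steps("Flow XO supports many messaging platforms."))
```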

Intent Detection

We will be showing the manual method of doing intent detection in the section below. However, we have a much simpler "shortcut" action for intent detection we included in our Open AI integration. You can read about that here: https://support.flowxo.com/article/296-open-ai#intent

It's not always possible or desirable to determine what action to take based on the bot's response, however. The true need of the customer is often specified in their question, and often but not always directly reflected in the Open AI response. Because of this, we'll use a technique called intent detection. In intent detection, the AI evaluates a user's question or command and tries to put it into a category based on the underlying meaning of their statement. The possible categories you will allow should usually be limited to ones your workflow logic (aka Flow) is designed to handle. We will use the same tools we use to generate chat responses to perform the intent detection. Here is how you configure a "Generate Chat" step to do this:

NOTE: If you want a sample flow concerning JUST intent detection to keep things simple, install this: https://flowxo.com/share/organic-neutral-5907

In this example, we are using Generate Chat (ChatGPT). You can also use Generate Text, but it's more expensive, so use ChatGPT if you can get good performance out of it based on your tests.

You'll notice we provide nearly no examples. This highlights the power of AI, that it works without any additional training with your specific examples. Here's a transcript trying it out just like it is:

You can see the model did a pretty good job of guessing the user intent. Once the user question is simplified to a simple category, you can easily use that category to execute a flow designed to process user questions in that exact category. You can see that at work in the sample.
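
In code terms, routing on a detected category looks like a lookup table from intent to handler. The Python sketch below uses a few category names from this section; the handler functions and their replies are hypothetical placeholders for the flows you would trigger.

```python
def handle_platform(question):
    return "Here is the list of platforms we support."


def handle_integration(question):
    return "Here is our integrations directory."


def handle_agent(question):
    return "Connecting you with a human agent."


def handle_unknown(question):
    return "Sorry, could you rephrase that?"


HANDLERS = {
    "platform": handle_platform,
    "integration": handle_integration,
    "agent": handle_agent,
}


def route(intent, question):
    """Pick the handler for an intent, defaulting to the unknown handler."""
    handler = HANDLERS.get(intent.strip().lower(), handle_unknown)
    return handler(question)


print(route("platform", "Does this work on Telegram?"))
print(route("billing", "Where is my invoice?"))
```

Keeping a default handler means an unexpected category never leaves the user without a reply.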

There is one small problem though - depending on how you asked about Telegram, Open AI classified it as both an "integration" and a "platform". Maybe it can do better. Let's tweak the prompt:

Notice we show the AI that even if it's asked a question with "integrate" and a "platform" (notice the list of example platforms in the prompt), it should categorize the intent as "platform". Let's test it out. We also tweaked the bot to use both Generate Chat (ChatGPT) and Generate Text so we could compare the output from both models:

You can see that ChatGPT still reported "integrations" when the word "integrate" was used in the question, but GPT-3 (Complete Text) did correctly identify that it was a platform.

Hurray for the more expensive model, but...

This time, ChatGPT was correct, and GPT-3 got it wrong.

Again, this emphasizes testing, testing, testing before you choose the model for your use case. In reality, the distinction between platform and integration is probably TOO subtle to expect the model to figure out on its own. In a case like this, I recommend using many more examples in your prompt if you need to distinguish between concepts so similar. Even so, it does a pretty darn good job on its own:

The models disagreed on Notion - but I'm not sure if I could answer that one correctly myself - Notion is pretty much a platform these days. I give both models a gold star here.

I tried to be sneaky, but the model did a good job. "Do you work on my website" is probably a question about services - do we, as a company, perform website development services. And the model correctly guessed that. The question "Does this work on my website" is probably referring to if your website is a platform the bot supports, and again, Open AI got it right. Well done.
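
If you end up adding many examples to distinguish similar intents, it can help to generate the prompt from a list instead of editing it by hand. The following Python sketch shows one way to do that. The prompt wording, the `Q:`/`Intent:` layout, and the function name are assumptions for illustration, not the prompt used in the sample flow.

```python
from typing import List, Tuple

def build_intent_prompt(
    categories: List[str],
    examples: List[Tuple[str, str]],
    question: str,
) -> str:
    """Build a few-shot intent detection prompt (hypothetical wording)."""
    lines = [
        "Classify the user's question into exactly one of these intents: "
        + ", ".join(categories) + ".",
        "Reply with the intent only.",
        "",
    ]
    # Each example shows the model a question and the intent we expect back.
    for text, intent in examples:
        lines.append(f"Q: {text}")
        lines.append(f"Intent: {intent}")
    # Leave the final intent blank for the model to fill in.
    lines.append(f"Q: {question}")
    lines.append("Intent:")
    return "\n".join(lines)

prompt = build_intent_prompt(
    categories=["platform", "integration", "services"],
    examples=[
        ("Can I integrate with Telegram?", "platform"),
        ("Do you work on my website?", "services"),
    ],
    question="Does this work on my website?",
)
```

You would then paste or pass the resulting text as the prompt for the intent detection step, and add or remove examples based on your test results.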

Now let's get back to the bigger example: using intent detection to help with human handoff and other kinds of interactivity.

We will insert an intent detection step in our chatbot just after the chatbot response is sent to the user, like so:

And here is the prompt:

We're giving it a LOT of examples, some of which might not have been necessary. But more examples can't hurt (unless you are running short on tokens), and you will determine the right number of examples through your testing. Now what do we do with these intents?

Let's take human handoff / transfer to an agent. We want to initiate a live agent chat when the detected intent is "agent". This is very easy. We just add a Start Live Chat step, and put this in the filter:

Don't forget to stop the flow when the filter condition is met (i.e. the intent is agent).

You probably want your bot to do other stuff though, besides just transfer your users to agents. In this example, we're asking the bot to generate images for us using the DALL-E endpoint from OpenAI. In the prompt for the Intent Detector, notice the examples that look like "draw me a picture of.." and "a photo of..." In those example phrases we put into the prompt, pay special attention to how we worded the expected output. Instead of just showing the bot we want the intent to be 'image' we ALSO include what the image should be of as part of the intent.

For example, "Can you draw me a picture of a snake" becomes "image of a snake" instead of simply "image"

When an intent starts with "image", we activate this portion of the flow:

The filters look like this:

The prompt for the Image Generation task from Open AI is very simple:

Since we used example phrases in our prompt to teach ChatGPT to say our intent is "image of <some image description>", we can just put the entire detected intent into the prompt for the image generator and get a good result.
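
The filter logic described above can be sketched in a few lines of Python. This is only an illustration of the decision, assuming the intent arrives as plain text; the action names are hypothetical and do not correspond to Flow XO identifiers.

```python
def plan_action(intent: str) -> dict:
    """Decide what to do with a detected intent (hypothetical action names)."""
    cleaned = intent.strip()
    lowered = cleaned.lower()
    if lowered == "agent":
        # Hand the conversation to a human and stop the rest of the flow.
        return {"action": "start_live_chat"}
    if lowered.startswith("image"):
        # The whole intent, e.g. "image of a snake", doubles as the image prompt.
        return {"action": "generate_image", "prompt": cleaned}
    # Anything else: no special handling.
    return {"action": "none"}
```

Because the image description travels inside the intent itself, no second call is needed to extract what the picture should show.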

Here's what this all looks like in action:

Pretty cool huh?

You can use this technique not just to draw pictures and send your users to live chat, but to provide relevant links to your documentation, or kick off entire flows.

One especially useful technique is to use intent detection to send your user to another, topic-specific ChatGPT bot that has a prompt designed specifically for that topic.

Here's an example of just that. You need to install and enable both flows for the sample to work. Also don't forget to disable your other flows from the earlier examples or you will get too many bots answering at once.

This is what the main flow looks like:

In this flow, ChatGPT does not generate a text response for the user. It ONLY detects the intent of the user's question and then uses that intent to decide which other logic to run. In this case the flow can do one of three things depending on the detected intent: send the user to live chat with an agent, trigger a pricing Q&A bot (also powered by ChatGPT), or repeat what the intent was and explain that it can't be handled at this time. Usually you'd have a flow for every intent you are detecting, but in the sample we're just repeating the intent back.

This example is mostly self-explanatory based on what you've learned so far, but I do want to point out one important aspect. The "trigger a flow" step sends the user's original question to the Pricing Q&A flow as metadata.

Then in the Pricing Q&A flow, we configure the trigger (a New Trigger) like this: (see here for info about triggering flows from other flows: https://support.flowxo.com/article/273-triggering-flows-from-other-flows)

And we configure ChatGPT like this:

Note that this is not the only way to implement this pattern. Instead of passing the query around from flow to flow as metadata, in the Pricing Q&A flow you could load the chat transcript instead, like in our earlier ChatGPT examples. This technique avoids having to pass the query from one flow to another, AND it allows the bot to keep a conversation history.

Command Interpretation

For our final concept for this article, we're going to discuss using OpenAI/ChatGPT to interpret commands from your user. This is almost exactly the same as intent detection, but with the added capability of allowing users to specify "arguments". For example, "send me a reminder at noon to take over the world". ChatGPT and GPT-3 can easily split this command up into parts your system can act on: the main command is "send me a reminder", and arguments are when (at noon) and what (to take over the world).

Here are the sample flows. Install them both, and remember to disable previous examples:

These examples are VERY similar to the intent detection samples, but are interesting in some unique ways. They demonstrate:

* Generating structured text, not just free text

* Differences between Generate Chat and Generate Text

* Using a Flow XO Code action to parse the result from the OpenAI responses

Let's start with the structured text. Here is the prompt for the Generate Text version of the command interpreter:

Notice the sample phrases in the prompt. They are showing the AI how to take free text typed by the user, and then split it up into a command name followed by various arguments formatted as JSON.

We are structuring the command like this: /command-name|arguments-as-json

So, "Can you set a reminder for 2pm" becomes /send_reminder|{"when":"2pm"}

One thing to notice is the decision to separate the command name and the arguments with a "|" (pipe) character. Open AI doesn't care about the format; we are constructing the examples this way so we can easily use a Flow XO text step or a code step to split the command name and the arguments.
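
To show why this format is convenient, here is a minimal Python sketch of splitting and parsing such a command. It illustrates the idea only and is not the code from the sample flow; the function name `parse_command` is made up for this example.

```python
import json
from typing import Any, Dict, Tuple

def parse_command(output: str) -> Tuple[str, Dict[str, Any]]:
    """Split '/command-name|arguments-as-json' into a name and an argument dict."""
    text = output.strip()
    if not text.startswith("/"):
        raise ValueError(f"not a command: {text!r}")
    # Split on the FIRST pipe only, so pipes inside the JSON arguments survive.
    name, _, raw_args = text[1:].partition("|")
    # A command without arguments simply gets an empty dict.
    args = json.loads(raw_args) if raw_args.strip() else {}
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    return name.strip(), args

name, args = parse_command('/send_reminder|{"when":"2pm"}')
```

After this call, `name` holds the command name and `args` holds the parsed arguments, ready to be passed to whatever handles the command.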

Let's see how this works, and how Generate Chat and Generate Text compare in this task:

Output labeled "Chat" uses Complete Chat (ChatGPT). Output labeled "GPT3" uses Complete Text.

So far so good. Both models have identical and correct output. But check this out:

"Before lunch" isn't really a quantifiable time. Complete Chat (ChatGPT) therefore includes the "before lunch" part in the message, and excludes the "when". GPT3, however, adds "before lunch" as the "when" argument. In these next examples, the models behave much more differently:

ChatGPT is trying to save humanity from you. GPT-3 is happy to help you with your evil plans.

If you paid close attention to the sample phrases in our prompt, you'll have noticed that our example was "set timezone to UTC" and our expected output was "/set_timezone|{'timezone':'Etc/UTC'}". In other words, we asked the model to convert a shorthand timezone to a full, standardized IANA timezone name, and it did a great job of following that example. But what about this?

And as usual, ChatGPT will challenge the user on bogus input, and GPT-3 is happy to play along. One more:

And yet again, ChatGPT won't do the impossible and set a reminder for yesterday. GPT-3 does what it's told. Pick whichever style works best for your application, but don't forget that "Complete Chat (ChatGPT)" is faster and cheaper, and might be worth some extra effort to deal with the extra variation in responses when it doesn't like your users' inputs.
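
One way to deal with that variation is to check whether the model's output actually matches the command format, and to treat everything else as a plain reply to show the user. The Python sketch below illustrates this under some assumptions: the regular expression, the function names, and the shape of the returned dictionaries are all hypothetical.

```python
import json
import re
from typing import Any, Dict, Optional, Tuple

# Assumed format: "/" + lowercase command name + "|" + a JSON object.
COMMAND_RE = re.compile(r"^/([a-z_]+)\|(\{.*\})$", re.DOTALL)

def try_parse(output: str) -> Optional[Tuple[str, Dict[str, Any]]]:
    """Return (name, args) if the output is a well-formed command, else None."""
    match = COMMAND_RE.match(output.strip())
    if match is None:
        return None
    try:
        args = json.loads(match.group(2))
    except json.JSONDecodeError:
        # Looks like a command, but the arguments are not valid JSON.
        return None
    return match.group(1), args

def interpret(output: str) -> Dict[str, Any]:
    """Classify model output as a command to run or a reply to show the user."""
    parsed = try_parse(output)
    if parsed is None:
        return {"kind": "reply", "text": output.strip()}
    name, args = parsed
    return {"kind": "command", "name": name, "args": args}
```

With this in place, an objection from ChatGPT such as "I can't set a reminder for yesterday" comes back as a reply instead of breaking the command handler. Note that arguments written with single quotes are not valid JSON and would also fall through to the reply branch.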

Now that we have our bot generating structured commands, we need to do something with the commands. The sample flow uses a Text step to split the command name from the arguments, and then a Code step to parse the arguments for later use. You could do everything in a Code step if you wanted as well.

The "Split Text" step will give us the command name and the arguments.

Then, we trigger a flow to actually handle the command, passing the command name as the 'trigger phrase' and the command arguments as metadata.

See: https://support.flowxo.com/article/273-triggering-flows-from-other-flows for more information on triggering flows.

The triggered flow, "Reminder Command" in this case, is responsible for actually executing the command. In the sample, we are parsing the arguments using a Code block and using a Wait Until a Date task to schedule a reminder.

Here is what the trigger for the command handler flow looks like. Note the trigger phrase is just the command name.

Here we use a Code step to parse the arguments. If you only allow one argument to your commands, you don't need to do this. But as you may often want multiple arguments, this is a great, very flexible way to interpret any number of command arguments.

Next, we use the value of the "when" argument (such as "at 2pm") to set the reminder. The "Wait until a Date" task from Flow XO already accepts natural language relative times, so it can handle "tomorrow at 9am" or "next Monday at noon" without further processing.

We tried it out, and it actually works!

Install the samples and try it out for yourself.

Open AI has more tools in its toolbelt as well, such as the ability to edit, transform, and fix text, translate text into different languages, fix grammar (excellent if you're not a native English speaker and you want to polish your bot messages to make them sound more natural in English), and many other tasks. Here's a bonus sample: https://flowxo.com/share/sky-blue-9213

That's it for now. We have just scratched the surface of what these powerful APIs can do. Just remember:

* ChatGPT (Complete Chat) is the cheapest (by 10x) and fastest of the Open AI tools, and can be used for most tasks

* Complete Chat and Complete Text do not always behave the same

* Both models are natural born liars - plenty of testing with your bot, and careful prompt engineering, are required to make a conversational bot you trust with your users

* Be very careful, these toys are ADDICTIVE

As always, thanks for reading, and if you have any questions/issues feel free to reach out to support@flowxo.com. We also offer professional services if you want to hire us to implement these tools or others into your business. Just drop us a line.

Happy Flowing!